# Recipe: Data analysis with project-state

April 28, 2026 · Tags: recipe, data-analysis, tutorial, milestones, decision-log

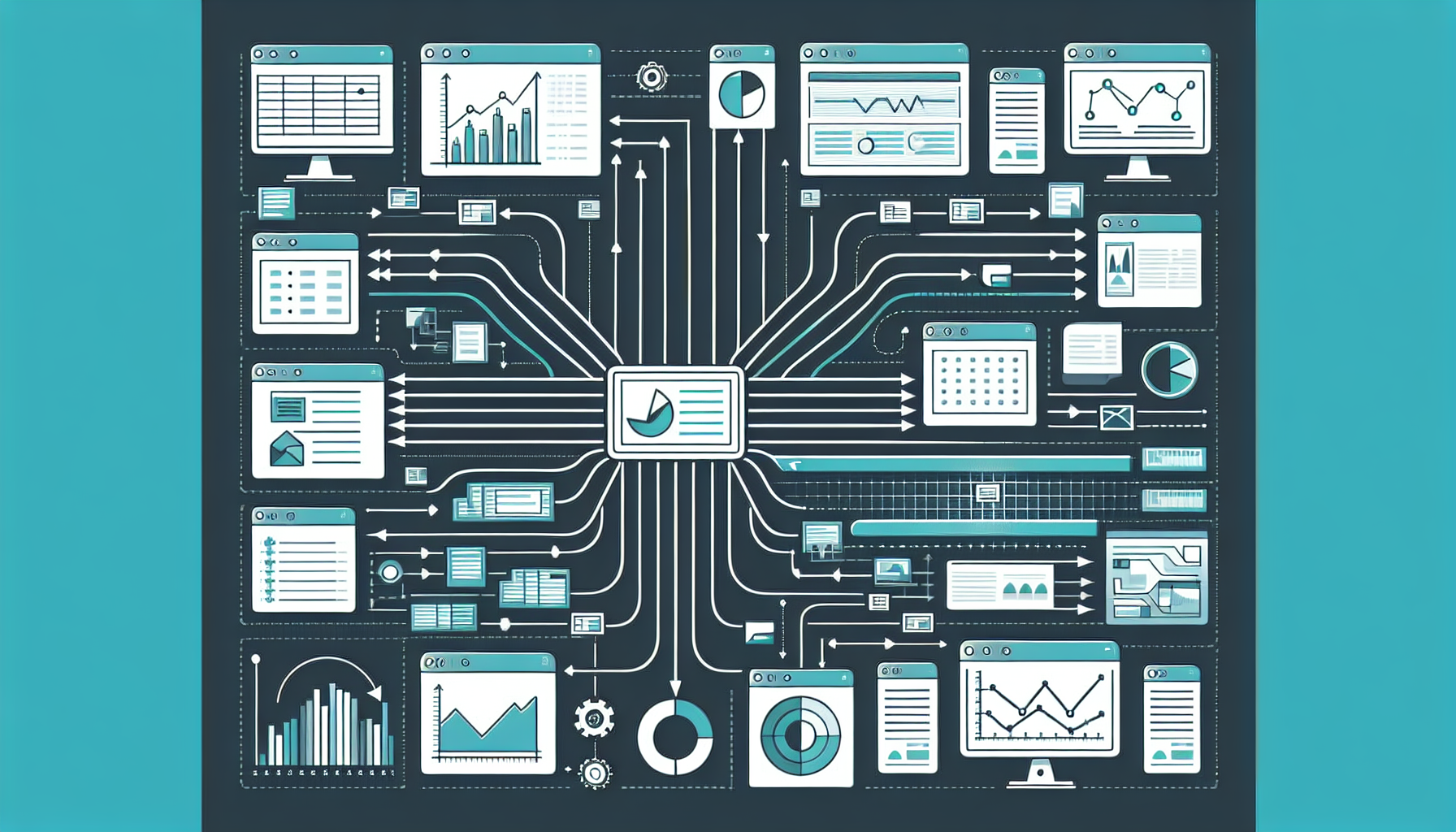

Data analysis projects have a shape that most PM tools handle badly. The work isn't a linear task list — it's an iterative cycle of data acquisition, cleaning, exploration, modelling, and insight delivery, with multiple stakeholders who want different things from the same analysis. project-state handles this well because it's structured around milestones and stakeholder reporting, not task boards.

Here's how to adapt it for a data analysis engagement.

## How data analysis maps to project-state concepts

| Data analysis concept | project-state concept |
|---|---|
| Analysis phases (acquire, clean, explore, model, deliver) | Phase preset |
| Dataset versions, model iterations | Milestones + `technical_progress` notes |
| Client, analyst team, exec sponsor | Stakeholder groups |
| Weekly analysis brief, final report | Reporting matrix entries |
| Scope change (new data source, new question) | Change register |
| Key analytical decisions (model choice, exclusion logic) | Decision log |
| Published findings, methodology notes | Document index |

## Step 1: Scaffold with a custom phase preset

```
ask claude: "scaffold a new v2 project, kind: research, phases: data-acquisition, data-cleaning, exploratory-analysis, modelling, insight-delivery"
```

Define gate criteria for each phase:

```yaml
phases:
  - name: data-acquisition
    gate_criteria:
      - all source datasets received and stored
      - data dictionary documented
      - access permissions confirmed for all team members
  - name: data-cleaning
    gate_criteria:
      - null/missing value audit complete
      - outlier policy documented and applied
      - cleaning log committed to project docs
      # Quoted because the text contains ": ", which YAML would otherwise parse as a key/value pair.
      - "clean dataset version locked (document index entry: status=approved)"
  - name: exploratory-analysis
    gate_criteria:
      - EDA summary document approved by analyst lead
      - key hypotheses documented as decisions
      - at least one stakeholder review of preliminary findings
  - name: modelling
    gate_criteria:
      - model selection decision logged
      - validation approach documented
      - baseline model milestone complete
  - name: insight-delivery
    gate_criteria:
      - final report milestone complete
      - client review meeting conducted
      - all deliverables in document index (status=delivered)
```

These gate criteria become the checklist the agent evaluates when you ask "can we advance the phase?"
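To make the gate check concrete, here is a minimal Python sketch of the idea. It is not project-state's implementation: `GateCriterion`, `can_advance`, and the `satisfied` flag are hypothetical names, and in practice the agent judges each criterion from the project's state rather than reading a boolean.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class GateCriterion:
    """One line of a phase's gate checklist (hypothetical structure)."""
    description: str
    satisfied: bool = False


def can_advance(criteria: list[GateCriterion]) -> tuple[bool, list[str]]:
    """Return (ok, unmet): ok is True only when every criterion is satisfied."""
    unmet = [c.description for c in criteria if not c.satisfied]
    return (not unmet, unmet)


# Gate checklist for the data-cleaning phase, copied from the preset above.
# The satisfied values are made up for illustration.
data_cleaning_gate = [
    GateCriterion("null/missing value audit complete", satisfied=True),
    GateCriterion("outlier policy documented and applied", satisfied=True),
    GateCriterion("cleaning log committed to project docs", satisfied=False),
    GateCriterion("clean dataset version locked", satisfied=False),
]

ok, unmet = can_advance(data_cleaning_gate)
print("advance" if ok else f"blocked by: {unmet}")
```

The useful property is that a blocked gate returns the unmet criteria, so the answer to "can we advance?" doubles as a to-do list.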

## Step 2: Set up stakeholders and the reporting matrix

A typical data analysis project has three stakeholder groups:

**Analyst team** — the people doing the work. They need internal status: what's blocked, what decisions are pending, what the current model state is.

**Client / sponsor** — the people who commissioned the analysis. They need progress updates and access to the findings as they emerge.

**Exec / decision-maker** — the end consumer of insights. They need a clean, concise view of findings and recommendations, not methodology.

```yaml
entries:
  - stakeholder_group: analyst_team
    report_type: internal_status
    cadence: weekly
    format: slack_message
    surface: slack
    channel: "#analysis-[project-name]"
  - stakeholder_group: client
    report_type: progress_update
    cadence: biweekly
    format: email_draft
    surface: gmail
  - stakeholder_group: exec_sponsor
    report_type: findings_brief
    cadence: on_milestone
    trigger_milestones: ["eda-complete", "modelling-complete", "final-report"]
    format: email_draft
    surface: gmail
```

The `on_milestone` cadence is key here — the exec sponsor doesn't need weekly noise, just signal when something significant lands.
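As a rough illustration of how a milestone-triggered cadence could be resolved, the sketch below filters matrix entries by a completed milestone. The function `reports_triggered_by` and the plain-dict representation are assumptions made for this example, not project-state's internals.

```python
from __future__ import annotations

# Reporting matrix entries mirroring the YAML above, reduced to the fields used here.
MATRIX = [
    {"stakeholder_group": "analyst_team", "report_type": "internal_status", "cadence": "weekly"},
    {"stakeholder_group": "client", "report_type": "progress_update", "cadence": "biweekly"},
    {
        "stakeholder_group": "exec_sponsor",
        "report_type": "findings_brief",
        "cadence": "on_milestone",
        "trigger_milestones": ["eda-complete", "modelling-complete", "final-report"],
    },
]


def reports_triggered_by(milestone_id: str, matrix: list[dict]) -> list[str]:
    """Return the report types that fire when the given milestone completes."""
    return [
        entry["report_type"]
        for entry in matrix
        if entry["cadence"] == "on_milestone"
        and milestone_id in entry.get("trigger_milestones", [])
    ]


print(reports_triggered_by("eda-complete", MATRIX))      # ['findings_brief']
print(reports_triggered_by("clean-dataset-v1", MATRIX))  # []
```

Time-based cadences (`weekly`, `biweekly`) are deliberately ignored here; only the milestone trigger is modelled.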

## Step 3: Define milestones around analytical outputs, not tasks

Milestones in data analysis should be analytical outputs, not work activities. "Clean dataset" not "clean the data". "EDA complete" not "run exploratory analysis".

```
ask claude: "add milestones:
- Clean dataset v1, due [date], owner: data engineer, definition of done: clean dataset file versioned and documented in project docs
- EDA summary, due [date], owner: lead analyst, definition of done: EDA document approved by team
- Baseline model, due [date], owner: ML engineer, definition of done: baseline results documented with evaluation metrics
- Model v1, due [date], owner: ML engineer, definition of done: model validated, assumptions documented
- Final report, due [date], owner: project lead, definition of done: report delivered and accepted by client"
```

The `technical_progress` note on each milestone is where the analytical narrative lives:

```
ask claude: "update milestone clean-dataset-v1: 70% complete, technical progress: missing value treatment complete for main tables, working on date normalization across three source systems which have inconsistent timezone handling"
```

This note feeds the next status report. The client update leaves out this level of detail, but the analyst team brief includes it.
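One way to picture this audience-dependent rendering is the sketch below. The `Milestone` class and `render_status_line` function are hypothetical, and the rule that only the analyst team sees the technical note is an assumption drawn from the description above, not from project-state's code.

```python
from dataclasses import dataclass


@dataclass
class Milestone:
    """Minimal stand-in for a milestone record (hypothetical fields)."""
    name: str
    percent_complete: int
    technical_progress: str


def render_status_line(milestone: Milestone, audience: str) -> str:
    """Build one status line; only the analyst team gets the technical note."""
    line = f"{milestone.name}: {milestone.percent_complete}% complete"
    if audience == "analyst_team":
        line += f" ({milestone.technical_progress})"
    return line


clean_dataset = Milestone(
    name="Clean dataset v1",
    percent_complete=70,
    technical_progress=(
        "missing value treatment complete for main tables; "
        "date normalization across three source systems in progress"
    ),
)

print(render_status_line(clean_dataset, "analyst_team"))
print(render_status_line(clean_dataset, "client"))
```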

## Step 4: Log analytical decisions

Data analysis is full of decisions that need to be traceable: why a particular exclusion criterion was applied, why one model was chosen over another, why an outlier was treated a certain way. Log them as they happen:

```
ask claude: "log a decision: excluding records with NULL in [field] rather than imputing, rationale: imputation would introduce systematic bias in the low-income cohort, decided by: analyst team, date: today"
```

```
ask claude: "log a decision: using XGBoost rather than logistic regression, rationale: non-linear interactions between [var1] and [var2] were significant in EDA, decided by: ML lead, approved by: client"
```

When the client asks "why did you exclude those records?" three months later, the decision is in the log with full rationale, not lost in a Slack thread.
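The value of the log comes from each entry carrying its rationale and owner, so that it can be searched later. A minimal sketch of that shape, assuming a simple in-memory list: the `Decision` fields mirror the prompts above, while `find_decisions` is a hypothetical helper rather than a project-state function.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Decision:
    """One immutable decision-log entry (hypothetical structure)."""
    summary: str
    rationale: str
    decided_by: str
    decided_on: date
    approved_by: Optional[str] = None


def find_decisions(log: list[Decision], keyword: str) -> list[Decision]:
    """Case-insensitive search over each entry's summary and rationale."""
    needle = keyword.lower()
    return [
        d for d in log
        if needle in d.summary.lower() or needle in d.rationale.lower()
    ]


decision_log = [
    Decision(
        summary="Exclude records with NULL in [field] rather than imputing",
        rationale="imputation would introduce systematic bias in the low-income cohort",
        decided_by="analyst team",
        decided_on=date.today(),
    ),
    Decision(
        summary="Use XGBoost rather than logistic regression",
        rationale="non-linear interactions between [var1] and [var2] were significant in EDA",
        decided_by="ML lead",
        decided_on=date.today(),
        approved_by="client",
    ),
]

for match in find_decisions(decision_log, "exclud"):
    print(f"{match.summary} | why: {match.rationale} | by: {match.decided_by}")
```

Freezing the dataclass reflects the intent that logged decisions are amended by adding new entries, not by editing old ones; that is a design choice of this sketch.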

## Step 5: Use the change register for scope changes

Scope changes in data analysis are common and dangerous. A new data source mid-project. A new question the client wants answered. A change in the target variable definition. These are material changes that need to be logged and approved.

```
ask claude: "log a change: client wants to add [new_datasource] to the analysis pipeline, classify it"
```

The change register classifies it (material — this expands scope and timeline) and creates a change record. The next status report to the client mentions it as a pending change request. Nothing moves until the change is approved and logged.
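The classify-then-approve flow can be sketched as follows. Everything here is illustrative: the rule that a change is material when it touches scope or timeline is inferred from the example above, and `"minor"` is a placeholder label for the non-material case, whose real name in project-state is not given in this recipe.

```python
from dataclasses import dataclass


@dataclass
class ChangeRecord:
    """A requested change and its approval state (hypothetical structure)."""
    description: str
    expands_scope: bool
    extends_timeline: bool
    approved: bool = False

    @property
    def classification(self) -> str:
        # Assumption: anything touching scope or timeline counts as material.
        if self.expands_scope or self.extends_timeline:
            return "material"
        return "minor"  # placeholder label, not a confirmed project-state value


def work_may_start(change: ChangeRecord) -> bool:
    """Material changes wait for approval; others are assumed not to need it."""
    return change.approved or change.classification != "material"


new_source = ChangeRecord(
    description="client wants to add [new_datasource] to the analysis pipeline",
    expands_scope=True,
    extends_timeline=True,
)

print(new_source.classification, work_may_start(new_source))
new_source.approved = True
print(new_source.classification, work_may_start(new_source))
```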

## Step 6: Deliver findings through the document index

As deliverables are produced — EDA summaries, model documentation, final reports — register them in the document index:

```
ask claude: "add document: EDA Summary v1.2, type: analytical-report, file: docs/eda-summary-v1.2.pdf, status: under-review, description: exploratory analysis covering [scope], author: [name]"
```

The document index tracks the approval lifecycle: `draft` → `under-review` → `approved` → `delivered`. Phase gate criteria can check document status — "can't advance to modelling until EDA Summary is approved."
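The lifecycle behaves like a small state machine, and a gate check is a comparison against a required status. The sketch below assumes documents only move forward one step at a time; whether project-state also allows a document to go back to `draft` is not covered here, and the function names are hypothetical.

```python
# Ordered approval lifecycle, as listed above.
LIFECYCLE = ["draft", "under-review", "approved", "delivered"]


def advance_status(current: str) -> str:
    """Move a document one step forward; raise if it is already at the end."""
    position = LIFECYCLE.index(current)  # raises ValueError for unknown statuses
    if position == len(LIFECYCLE) - 1:
        raise ValueError(f"'{current}' is the final status")
    return LIFECYCLE[position + 1]


def gate_allows(doc_status: str, required: str = "approved") -> bool:
    """True once the document has reached at least the required status."""
    return LIFECYCLE.index(doc_status) >= LIFECYCLE.index(required)


status = "under-review"               # EDA Summary v1.2 as registered above
print(gate_allows(status))            # False: modelling gate stays closed
status = advance_status(status)
print(status, gate_allows(status))    # approved True
```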

## The result

A data analysis project running on project-state has:

- Full decision traceability from day one

- Automatic status reports that don't require manual preparation

- Phase gates that enforce analytical rigor before advancing

- A change register that catches scope creep

- Stakeholder-appropriate reporting: analyst brief, client update, exec findings brief

- A document index that tracks every deliverable through its approval lifecycle

The analyst team focuses on the analysis. The system handles the reporting.